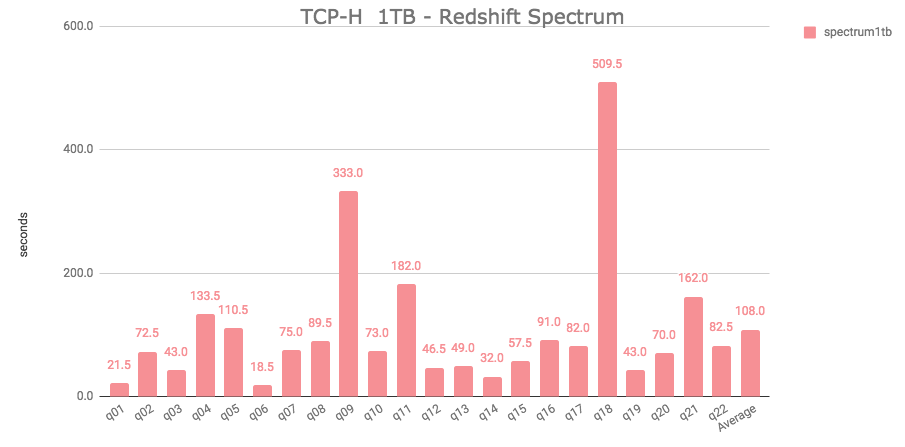

With the advent of cross-database querying on RA3 nodes, it's clear that we'll need to change more functionality around the runtime cache and catalog queries. There are other places in the dbt-redshift adapter codebase where it takes a strong stance against the possibility of cross-database querying (#3179). Given that, I'm actually surprised you didn't run into a compilation error earlier. We started discussing how dbt should determine its behavior in the other issue. Ideally, there would be an obvious metadata query to run (or, better yet, a piece of information to pull off the cursor object), but after checking with the Redshift team, the query to determine node type is a bit more involved. Rather than go that route, I find myself in favor of a new `ra3: true | false` profile config, `false` by default, that when `true` will enable features like cross-database querying. For all other node types, we'd leave the existing restrictions in place. Thankfully, we already have a good sense of what this can look like in dbt-snowflake, and we can adopt it piecemeal. To my knowledge, it's still not possible to create tables or views in a different Redshift database.

dbt is a tool that runs on top of a data warehouse, and it is compatible not only with Redshift but also with Postgres. In this quickstart guide, you'll learn how to use dbt Cloud with Redshift. It will show you how to set up a Redshift cluster and load sample data into your Redshift account. See the post for complete instructions on using this project. Now you have a better understanding of how to leverage Redshift sort and distribution configurations in conjunction with dbt modeling to alleviate your modeling woes. If you have any more questions about Redshift and dbt, the #db-redshift channel in dbt’s community Slack is a great resource.
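The sort and distribution configurations discussed here ultimately become Redshift table attributes. The minimal Python sketch below shows roughly what SQL those settings translate to; it is an illustration, not dbt-redshift's actual macro. The function `redshift_ctas` is hypothetical, and its parameters merely echo dbt-redshift's `dist`, `sort`, and `sort_type` model configs.

```python
from typing import Optional, Sequence, Union

# Distribution styles that Redshift accepts without a key column.
STYLE_ONLY_DIST = {"all", "even", "auto"}


def redshift_ctas(
    relation: str,
    select_sql: str,
    dist: Optional[str] = None,
    sort: Optional[Union[str, Sequence[str]]] = None,
    sort_type: str = "compound",
) -> str:
    """Build a CREATE TABLE AS statement with optional dist/sort table attributes (hypothetical helper)."""
    lines = [f"create table {relation}"]
    if dist:
        if dist.lower() in STYLE_ONLY_DIST:
            # 'all', 'even', or 'auto' map straight to DISTSTYLE with no key column.
            lines.append(f"diststyle {dist.lower()}")
        else:
            # Any other value is treated as the column to hash-distribute rows on.
            lines.append(f"diststyle key distkey ({dist})")
    if sort:
        kind = sort_type.lower()
        if kind not in ("compound", "interleaved"):
            raise ValueError("sort_type must be 'compound' or 'interleaved'")
        # Accept a single column name or a list of columns.
        cols = [sort] if isinstance(sort, str) else list(sort)
        lines.append(f"{kind} sortkey ({', '.join(cols)})")
    lines.append("as")
    lines.append(select_sql)
    return "\n".join(lines)


print(redshift_ctas(
    "analytics.fct_orders",
    "select * from analytics.stg_orders",
    dist="customer_id",
    sort=["order_date"],
))
```

With a key column such as `customer_id`, rows sharing that value land on the same slice, which helps joins on that key; `dist="all"` instead copies a small dimension table to every node.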
Great point, thanks for opening the issue.

Amazon Redshift is a fast, fully managed, petabyte-scale data warehouse service that makes it simple and cost-effective to efficiently analyze all your data using your existing business intelligence tools. It allows you to run complex analytic queries against petabytes of structured data, using sophisticated query optimization and columnar storage. Redshift Spectrum schema(s) are where external tables live.

dbt v0.15.0 added support for an `external` property within sources that can include information about location, partitions, and other database-specific properties. This package provides macros to create/replace external tables and refresh their partitions, using the metadata provided in your. Source code for the blog post "Lakehouse Data Modeling using dbt, Amazon Redshift, Redshift Spectrum, and AWS Glue": learn how dbt makes it easy to transform data and materialize models in a modern cloud data lakehouse built on AWS.

We’ve gone from ~30 to ~1000 employees in the 3 years since we started using Looker and have learned a thing or two along the way. Before any of that, we rolled our own tool. We’ve gone through 3 “generations” of Looker reporting. Gen 1 was just wrapping LookML around our schema and forcing Looker to do the joins and generate SQL for everything we wanted to know. Gen 2 involved a bunch of common table expressions within Looker itself that worked, but were developed without much thought as to data mart design. Gen 3 is where we are now, with super deliberate scoping and implementation of warehouses in dbt. We used to do all of our transforms via dbt within Redshift, but have been offloading the heavier-duty pieces (like Snowplow event processing) to Spark jobs because they were too taxing on Redshift. And we’ll likely move from Redshift to Snowflake (or maybe Bigtable) in Q2 of 2020.
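As a rough illustration of what such macros have to emit, here is a hypothetical Python sketch that turns source metadata (location, partitions, file format) into Redshift Spectrum DDL. `ExternalSource`, `create_external_table`, and `add_partition` are illustrative names, not part of dbt or the package; the sketch assumes Parquet files laid out in Hive-style `name=value/` partition folders.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class ExternalSource:
    """Hypothetical stand-in for a source's `external` metadata."""
    schema: str                         # Redshift Spectrum external schema
    table: str
    location: str                       # S3 prefix, e.g. 's3://bucket/events'
    columns: List[Tuple[str, str]]      # (column name, Redshift data type)
    partitions: List[Tuple[str, str]] = field(default_factory=list)
    stored_as: str = "parquet"


def create_external_table(src: ExternalSource) -> str:
    """Build CREATE EXTERNAL TABLE DDL, adding PARTITIONED BY only when partitions exist."""
    cols = ", ".join(f"{name} {dtype}" for name, dtype in src.columns)
    sql = f"create external table {src.schema}.{src.table} ({cols})"
    if src.partitions:
        parts = ", ".join(f"{name} {dtype}" for name, dtype in src.partitions)
        sql += f" partitioned by ({parts})"
    sql += f" stored as {src.stored_as} location '{src.location}'"
    return sql


def add_partition(src: ExternalSource, values: Dict[str, str]) -> str:
    """Register one Hive-style partition folder (e.g. dt=2020-01-01/) with Spectrum."""
    names = [name for name, _ in src.partitions]
    if set(values) != set(names):
        raise ValueError(f"expected values for partitions {names}")
    spec = ", ".join(f"{n}='{values[n]}'" for n in names)
    path = "".join(f"{n}={values[n]}/" for n in names)
    base = src.location if src.location.endswith("/") else src.location + "/"
    return (
        f"alter table {src.schema}.{src.table} add if not exists "
        f"partition ({spec}) location '{base}{path}'"
    )


events = ExternalSource(
    schema="spectrum",
    table="events",
    location="s3://my-bucket/events",   # placeholder bucket
    columns=[("event_id", "varchar(64)")],
    partitions=[("dt", "date")],
)
print(create_external_table(events))
print(add_partition(events, {"dt": "2020-01-01"}))
```

Refreshing partitions then amounts to running `add_partition` for each new folder; `if not exists` keeps reruns harmless.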
We use Snowplow for clickstream analytics. Our pipeline looks like Airflow -> S3 -> dbt with Spark/EMR or Redshift/Spectrum -> Redshift data marts -> Looker. At least, that’s the way we like our pipelines to work; in practice, we have a couple of extractions that land directly in Redshift (we extract Zendesk data, for instance, with Stitch Data). Among Redshift’s outstanding features is Amazon Redshift Spectrum, which lets users query data stored in Amazon S3 buckets directly, avoiding the need to transfer it from one database to another.
